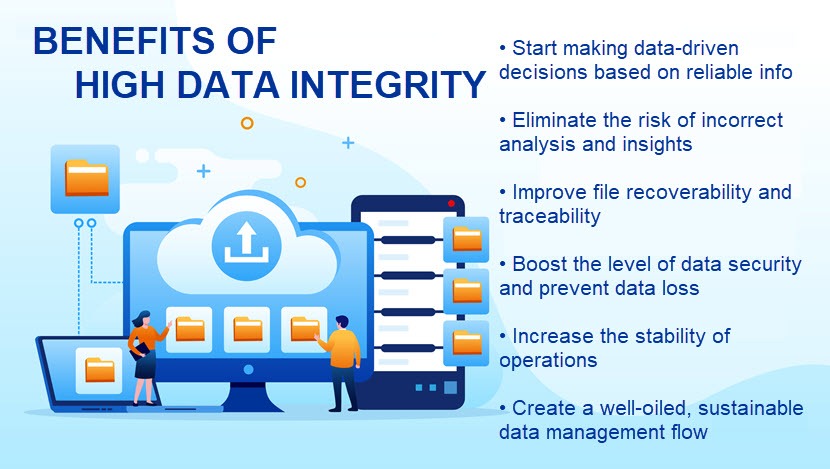

When a company makes decisions based on unreliable data, incorrect insights can seriously impact the bottom line. You cannot make informed decisions on end users and products without correct info, which is why maintaining high levels of data integrity should be your top priority.

This article is an intro to data integrity and the value of keeping files clean, reliable, and accurate. Read on to learn what data integrity is and see how data-driven organizations ensure files stay healthy at all stages of the data lifecycle.

Data Integrity Definition

Data integrity refers to the characteristics that determine data reliability and consistency over a file's entire lifecycle (capture, storage, retrieval, update, backup, transfer, etc.). No matter how many times someone edits or copies a file, a piece of data with integrity will not have any unintended changes.

As a term, data integrity is broad in scope and can have different meanings depending on the context. The phrase can describe:

- The state of data (e.g., valid or invalid).

- Processes of ensuring and preserving the validity of data (e.g., error checking or file validation).

Data integrity should be a critical aspect of any system that collects, stores, processes, or retrieves data. A company typically enforces integrity through various rules and procedures around data interactions (deletion, insertion, editing, updating, etc.).

The main goal of data integrity is to prevent any unintentional changes to business files (either malicious or accidental). A piece of data with integrity should have the following characteristics:

- Attributable (a company should know how and when it created or obtained data).

- Traceable (the team must know what happened to the file throughout its entire lifecycle).

- Original (there are no unnecessary copies of the file).

- Accurate (all contained info is correct and error-free).

- Legible (the file is complete and has well-defined attributes that enable consistency with other data).

In some designs, data integrity can also refer to data safety regarding regulatory compliance, most typically in terms of GDPR.

Learn the difference between CCPA and GDPR, two similar regulations that enforce data privacy and integrity in different ways (and geographic locations).

Data Integrity vs. Data Quality

The goal of data quality (or data accuracy) is to guarantee the accuracy of files. File quality aims to ensure info is correct and that the files stored in a database are compliant with the company's standards and needs.

A company can evaluate data quality via various processes that measure data's reliability and accuracy. Some key metrics of data quality are:

- Completeness (an indication of the comprehensiveness of data based on specific variables and business rules).

- Uniqueness (a measure of duplication of items within a data set or in comparison with another database).

- Validity (an extent of alignment to the defined business rules and requirements).

- Timeliness (whether data is up-to-date and available within an acceptable time frame).

- Accuracy (how correct the data item describes the object).

- Consistency (a measure of the absence of differences between the data items representing the same objects).

There is a lot of overlap between data integrity and quality. Integrity also requires complete and accurate files, but simply having high-quality data does not guarantee that an organization will find it helpful.

For example, a company may have a database of user names and addresses that is both valid and up to date. However, that database does not have any value if you do not also have the supporting data that gives context about end-users and their relationship with the company.

Data Integrity vs. Data Security

Whereas data integrity aims to keep files useful and reliable, data security protects valuable info from unauthorized access. Data security is a fundamental subset of integrity as it is impossible to have high levels of reliability without top-tier protection.

Companies rely on various techniques to protect files from external and insider threats. Common strategies include:

- Strict identity and access management.

- Network segmentation.

- Data backups.

- At-rest encryption.

- Threat identification systems (namely intrusion detection systems).

- Various disaster recovery capabilities.

Security is vital to integrity. Data security boosts integrity by protecting files from threats, maintaining privacy, and ensuring no one can compromise valuable info.

If you wish to improve your data security, your team should learn about the two most common ways someone compromises business files: data breaches and data leaks.

Why Is Data Integrity Important?

For most companies, compromised data is of no use. For example, if someone alters your sales data and there is no record of why the edit happened or who changed the file, there is no way of knowing whether you can trust that data. All the decisions you make based on that file will not come from reliable info, and you can easily make costly mistakes in terms of:

- Predicting customer behavior.

- Assessing market activity and needs.

- Evaluating expansion opportunities.

- Adjusting sales strategies.

Not having reliable data can severely impact your business performance. According to a recent McKinsey study, data-based decision-making is how top organizations rule their markets. A data-driven company basing moves on reliable data is:

- Around 23 times more likely to outperform competitors in customer acquisition.

- Over nine times more likely to retain users.

- Up to 19 times more profitable than the nearest competitor.

Unfortunately, most senior executives do not have a high level of trust in how their organization uses data. A recent study by KPMG International reveals the following numbers:

- Only 35% of C+ executives say they have a high level of trust in the way their company uses data and analytics.

- Over 92% of decision-makers are concerned about the negative impact of data and analytics on an organization's reputation.

Maintaining high levels of data integrity starts with a reliable infrastructure. PhoenixNAP's Bare Metal Cloud is an ideal hosting option if you wish to boost integrity through various automation features and top-tier data security.

Types of Data Integrity

Maintaining high levels of reliability requires an understanding of the two different types of data integrity: physical and logical integrity.

Physical Data Integrity

Physical integrity refers to processes that ensure systems and users correctly store and fetch files. Some of the challenges of this type of data integrity can include:

- Various human error-caused issues.

- Electromechanical faults.

- Design flaws.

- Power outages.

- Natural disasters.

- Extreme temperatures.

- A hacker disrupting a database (e.g., with a DDoS attack or an SQL injection).

- Material fatigue and corrosion.

- Various types of cybersecurity attacks.

Some of the most common methods a company can ensure high levels of physical integrity are:

- Setting up redundant hardware.

- Using a clustered file system.

- Relying on error-correcting memory.

- Deploying an uninterruptible power supply.

- Using certain types of RAID arrays.

- Using a watchdog timer on critical subsystems.

- Relying on error-correcting codes.

Typically, data centers are the facilities that guarantee the highest levels of physical data integrity. Our article on data center security explains why.

Logical Integrity

Logical integrity is concerned with the correctness of a piece of data within a particular context. Common challenges of logical integrity are:

- Human errors.

- Software bugs.

- Design flaws.

Standard methods of ensuring high levels of logical integrity include:

- Check constraints.

- Foreign key constraints.

- Program assertions.

- Run-time sanity checks.

Logical integrity has three subsets when dealing with relational databases:

- Entity integrity: Entity integrity uses primary keys (unique values that identify a piece of data) to ensure tables have no duplicate content or null-value fields.

- Referential integrity: This type of data integrity refers to processes that use the concept of foreign keys to control changes, additions, and deletions of data.

- Domain integrity: Domain integrity ensures the accuracy of each piece of data in a domain (a domain is a set of acceptable values that a column can and cannot contain, such as a column that can only have numbers).

In addition to the three subsets, some experts also classify user-defined integrity. This subcategory refers to custom rules and constraints that fit business needs but do not fall under entity, referential, or domain integrity.

Data Integrity Risks

Various factors can affect the integrity of business data. Some of the most common risks include:

- Human error: Users and employees are the most significant risk factor for data integrity. Typing in the wrong number, incorrectly editing data, duplicating files, and accidentally deleting info are typical mistakes that jeopardize integrity.

- Hardware-related issues: Sudden server crashes and compromised IT components can lead to the incorrect or incomplete rendering of data. These issues can also limit access to data.

- Inconsistencies across formats: The lack of consistency between formats can also impact data integrity (for example, a set of data in an Excel spreadsheet that relies on cell referencing may not be accurate in a different format that does not support those cell types).

- Transfer errors: A transfer error occurs when a piece of data cannot successfully transfer from one location in a database to another.

- Security failures: A security bug can easily compromise data integrity. For example, a mistake in a firewall can allow unauthorized access to data, or a bug in the backup protocol could delete specific images.

- Malicious actors: Spyware, malware, and viruses are serious data integrity threats. If a malicious program invades a computer, a third party can start altering, deleting, or stealing data.

Non-compliance with data laws can also cause serious integrity concerns. Failing to comply with regulations such as HIPAA and PCI will also lead to hefty fines.

Examples of Data Integrity Violations

Below are some real-life scenarios in which a company can compromise file integrity:

- Someone at the company accidentally tries to insert the data into the wrong table.

- The network goes down while someone is transferring data between two databases.

- An employee enters a date outside an acceptable range.

- An end-user enters a phone number in the wrong format.

- An app bug attempts to delete the wrong file.

- A user deletes a record in a table that another database is referencing.

- Hackers manage to steal all user passwords from a poorly protected database.

- A fire sweeps through the data center and burns a computer storing a valuable database.

- The regular database backups have been failing for the past month without alerting the security team.

- A hacker breaches a database and uses ransomware to encrypt sensitive data.

You can learn more about ransomware by reading these articles:

- Ransomware Examples

- How to Prevent Ransomware: 18 Best Practices

- Linux Ransomware: Famous Attacks and How to Protect Your System

- Terrifying Ransomware Statistics & Facts

If you wish to protect your company from this cyber threat, pNAP's ransomware protection can keep you safe with a mix of immutable backups and robust disaster recovery.

How to Ensure Data Integrity

Below is a list of recommendations and best practices you can rely on to improve data integrity in your organization.

Understand Your Data's Lifecycle

You must know everything about your data to take complete control of its integrity. Start by answering the following questions:

- What data does your company store and why?

- How does the company collect data?

- Are different types of data logically separated?

- Where does your info come from?

- How do teams analyze and consume data?

- Who creates valuable files?

- Who has access to sensitive files?

- Which employees can modify data?

- What is the company's process for deleting expired data?

You should also account for any relevant regulations (GDPR, CCPA, HIPAA, etc.) at this stage. Only once you know what data your company collects and how staff members handle files will you be ready to start improving overall integrity.

Create an Audit Trail

An audit trail keeps a record of every interaction a piece of data has during its lifecycle. An audit records every time a user transfers or uses a file, so you will have high levels of visibility. A typical end-to-end trail should have the following characteristics:

- Automatic generation.

- Immutability that prevents tampering.

- The ability to track and record every event (access, create, delete, modify, etc.).

- Time-stamping of every event.

- The capability to align events with individual user accounts.

If you suffer a breach or run into a data bottleneck, an audit trail will help track down the source of the problem and speed up recovery time.

Strict Access Controls

Keeping unauthorized individuals away from sensitive files is vital to integrity. You should:

- Map all employees and systems to understand who has access to what files.

- Use two-factor authentication (2FA) when verifying users.

- Grant access rights on a need-to-know and need-to-use basis.

- Use a tried-and-tested authentication protocol, such as Kerberos.

Learn about zero-trust security, a security model of least privilege in which no user or employee has access to sensitive data by default.

Use Error Detection Software

An error detection software helps monitor data integrity automatically. These programs help by:

- Isolating outlines.

- Reducing the likelihood of accidental errors.

- Assisting employees in maintaining data hygiene.

- Enforcing data editing and management rules.

- Identifying causes behind mistakes.

- Recommending steps for avoiding errors in the future.

You can also use anomaly detection services to keep data integrity risks at a manageable level.

Identify and Eliminate Security Vulnerabilities

Looking for and proactively removing security weaknesses is crucial to maintaining high levels of file integrity. Depending on your budget and the team's skill set, you can search for vulnerabilities either on an in-house level or hire an external team of security professionals.

Read our article on vulnerability assessments to learn how the pros evaluate a system for weaknesses. You can also take the analysis a step further and organize a penetration test to see how the system responds to real-life breach attempts.

Use Validation

Planning, mapping, and dictating how the company uses data is vital, but you should also use validation to ensure staff members follow instructions. You should deploy programs (or maybe even staff members) that regularly test, validate, and revalidate if IT systems and personnel operate according to business-wide procedures.

You should also use input validation whenever a known or an unknown source supplies your data set (an end-user, app, employee, etc.).

Communicate the Value of Data Integrity

Educating your personnel about info integrity is as vital as enforcing how they handle data. Employees should know how to:

- Properly use, store, retrieve, and edit data.

- Recognize and counter potential threats to data integrity.

- Report irresponsible behavior towards business data.

- Find all instructions and guides on proper file management.

If you are organizing a training session for your employees, our article on security awareness training offers valuable tips and tricks to get the most out of the program.

Search for and Remove Duplicate Data

You need to clean up stray data and remove unnecessary duplicates of sensitive files. Stray copies can easily find a home on a document, spreadsheet, email, or a shared folder where someone without proper access rights can see it.

While you can task humans to look for and delete duplicate data, a much safer long-term bet is to rely on a tool that can clean up data automatically both on-prem and in the cloud.

Have Backups of Sensitive Data

You should use backups to preserve integrity in all scenarios. Backing up files helps prevent data loss and, if you use an immutable backup, you can safely store data in its original state. That way, no amount of edits or attempts to delete a file can lead to permanent data loss.

PhoenixNAP's backup and restore solutions help guarantee data availability through custom cloud backups and immutable storage solutions.

Improve Integrity and Boost Your Decision-Making

Companies that know how to maintain high levels of integrity thrive in today's market, while those that cannot correctly manage info often lose a vital competitive edge. Improve your levels of data integrity to start making confident, data-driven decisions that steer your company in the right direction.