The mention of Vagrant in the title might have led you to believe that this is yet another article about the power of sharing application environments as one does with code, or about how Vagrant is a great facilitator for that approach. However, plenty of content already exists on that topic, and by now its benefits are widely known. Instead, we will describe our experience of putting Vagrant to use in a somewhat unusual way.

A Novel Idea

The idea is to extend a developer workstation running Windows so that it also runs a Linux kernel in a VM, and to make the bridge between the two as seamless as possible. Our motivation was to eliminate certain pain points and restrictions in development that stem from the choice of OS for the developer’s local workstation, be it an organizational requirement, regulatory enforcement or any other factor that may or may not be under the developer’s control.

This was not the only approach we evaluated: we also considered shifting work entirely to a guest OS on a VM, using Docker containers and leveraging Cygwin. And yes, we also considered replacing the host OS altogether. However, we found that the way the technologies come together in this approach can be quite powerful.

We’ll take this opportunity to share some of the lessons we learned and the limitations of the approach, along with some ideas on how certain problems can be solved.

Why Vagrant?

Neither the problem we were trying to solve nor our approach to solving it depends on Vagrant. In fact, the idea is based on having a virtual machine (VM) deployed on a local hypervisor. Running the VM locally might seem dubious at first. However, as we found out, it gives us certain advantages that let us create a better developer experience by turning the VM into an extension of the workstation.

We opted for VirtualBox as the virtualization provider, primarily because of our familiarity with the tool, and this is where Vagrant comes into play. Vagrant is one of the tools that make up HashiCorp’s open-source suite, which aims to solve the different challenges of automating infrastructure provisioning.

In particular, Vagrant is concerned with managing VM environments during the development phase. (For production environments, other tools in the same suite, namely Terraform and Packer, are better suited to the job.) Like those tools, Vagrant is based on configuration as code. This means that an environment can easily be shared between team members, and that changes are version-controlled and easy to track, making the resulting product (the environment) consistently repeatable. Vagrant is opinionated, so declaring an environment and its configuration is concise, which makes it easy to write and understand.

Read more about the effects of VMs on development in our blog post DevOps and Virtualization.

Why Ansible?

After settling on Vagrant for our solution and enjoying the automated creation of the VM, the next step was to find a way to provision that VM in line with the principles Vagrant advocates.

We do not recommend having Vagrant spin up the VMs in an environment and then manually installing and configuring your system’s dependencies. Provisioners are a core concept in Vagrant, and there are plenty to choose from. In our case, as long as our provisioning remained simple, we stuck with the Shell provisioner (Vagrant simply uploads scripts to the guest OS and executes them).
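As a point of reference, a shell-provisioned Vagrantfile can be as small as the sketch below. The script name `bootstrap.sh` is a hypothetical placeholder; `centos/7` is the public CentOS 7 box, matching the guest OS we used.

```ruby
# Vagrantfile (minimal sketch; "bootstrap.sh" is a hypothetical script name)
Vagrant.configure("2") do |config|
  config.vm.box = "centos/7"

  # The shell provisioner uploads the script to the guest and runs it there.
  config.vm.provision "shell", path: "bootstrap.sh"
end
```

Running `vagrant provision` executes the whole script again, which is why every step in it has to be safe to repeat.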

Soon after, it became obvious that this approach would not scale well, and that the scripts were too verbose. The biggest pain point was that developers had to take care to write the scripts idempotently by hand: steps were frequently added to the configuration, and re-provisioning everything from scratch each time would have been overkill, so the existing steps had to be safe to run again.

At this point, we decided to use Ansible. Ansible, by Red Hat, is another open-source automation tool, built around the idea of executing plays defined in a playbook, where a play can be thought of as a list of tasks mapped against a group of hosts in an environment.

These plays should ideally be idempotent, although that is not always possible. Once again, the entire configuration is declared as code, this time in YAML. The biggest win of this strategy is that the heavy lifting is done by the community, which provides Ansible modules (configurable Python scripts that perform specific tasks) for virtually anything one might want to do. Installing dependencies and configuring the guest according to industry standards becomes easy and concise, without requiring the developer to dig into the nitty-gritty details, since modules are generally highly opinionated. All of these concepts combine well with Vagrant’s principles, and the integration between the two works like a charm.

There was one major challenge to overcome in getting the two to work together. Our host machine runs Windows, and although Ansible is gradually adding support for managing Windows targets, it simply does not run on a Windows control machine. This left us with two options: an additional environment acting as the Ansible controller, or the simpler approach of having the guest VM run Ansible to provision itself.

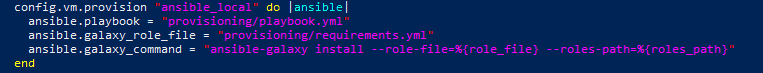

The drawback of this approach is that it pollutes the target environment. We were willing to accept this compromise, as the alternative was cumbersome. Vagrant lets you achieve this by simply changing the provisioner identifier from `ansible` to `ansible_local`, in which case it automatically installs the required Ansible binaries and dependencies on the guest for you.
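A minimal sketch of what this looks like in a Vagrantfile, assuming a `playbook.yml` next to the Vagrantfile (the file name is our choice for illustration):

```ruby
# Vagrantfile (minimal sketch; assumes playbook.yml sits next to this file)
Vagrant.configure("2") do |config|
  config.vm.box = "centos/7"

  # "ansible" would run Ansible on the host, which is not possible on Windows;
  # "ansible_local" installs Ansible inside the guest and runs it there.
  config.vm.provision "ansible_local" do |ansible|
    ansible.playbook = "playbook.yml"
  end
end
```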

File Sharing

One of the cornerstones of our setup was making the local workspace available from within the guest OS, so that the tooling that makes up a working environment is readily available for running builds inside the guest. There are plenty of options for solving this problem, and they vary depending on the use case. The simplest approach is to rely on VirtualBox’s file-sharing functionality, which gives near-instant, two-way syncing, and setting it up is a one-liner in the Vagrantfile.
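For instance, assuming the workspace lives in `C:\Workspace` on the host and should appear under `/vagrant/workspace` in the guest (both paths are illustrative), the one-liner could look like this:

```ruby
Vagrant.configure("2") do |config|
  config.vm.box = "centos/7"

  # Host directory first, guest mount point second (illustrative paths).
  # With the VirtualBox provider, the default share type is a VirtualBox
  # shared folder, which syncs in both directions.
  config.vm.synced_folder "C:/Workspace", "/vagrant/workspace"
end
```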

The main objective here was to share code repositories with the guest, but file sharing can also come in handy for replicating the configuration of other tooling. For instance, one might find it useful to share Maven’s user settings file, the entire local repository, local certificates for authentication, etc.
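Sharing Maven’s configuration might look like the sketch below. It assumes the default `vagrant` user on the guest and Maven’s standard `.m2` directory in the host user’s home. Since VirtualBox shared folders operate on directories, the whole `.m2` directory (settings file and local repository) is shared rather than `settings.xml` alone.

```ruby
Vagrant.configure("2") do |config|
  config.vm.box = "centos/7"

  # Share the host's ~/.m2 (settings.xml plus the local repository) with the
  # guest user's home; create: true creates the host directory if missing.
  config.vm.synced_folder File.join(Dir.home, ".m2"), "/home/vagrant/.m2",
    create: true
end
```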

Port Forwarding

VirtualBox’s networking options were a powerful ally. There are several ways to create private networks (when you have more than one VM) or to expose the VM on the same network as the host. For us, it was sufficient to rely on a host-only network (i.e. the VM is reachable only from the host) and to forward a number of ports through simple NAT.

The major benefit is that you do not need to keep changing software configuration depending on whether it runs locally or inside the guest. Each forwarded port takes a single line of configuration in Vagrant, and this NAT-based forwarding can be set up in either direction (host to guest or guest to host).
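A sketch of both settings, with an arbitrary host-only IP address and port 8080 standing in for whatever ports your applications use:

```ruby
Vagrant.configure("2") do |config|
  config.vm.box = "centos/7"

  # With VirtualBox, "private_network" attaches a host-only adapter, so the
  # VM is reachable from the host only (the IP address is illustrative).
  config.vm.network "private_network", ip: "192.168.56.10"

  # Forward host port 8080 to guest port 8080 through the NAT adapter, so
  # clients on the host keep using localhost:8080 wherever the app runs.
  config.vm.network "forwarded_port", guest: 8080, host: 8080,
    host_ip: "127.0.0.1"
end
```

In the other direction, with VirtualBox’s default NAT setup the guest can usually reach services on the host through the NAT gateway address `10.0.2.2`.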

Bringing it together

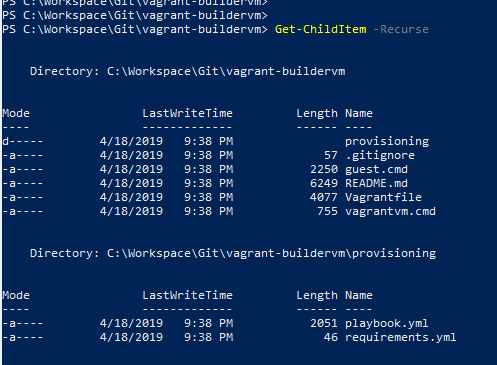

Having defined the foundation for our solution, let’s now briefly go through what we needed to implement all of this. You will see that for the most part, it requires minimal configuration to reach our target.

The first part of the puzzle is the Vagrantfile, in which we define the base image for the guest OS (we went with CentOS 7), the resources we want to allocate (memory, vCPUs, storage), file shares, networking details and provisioning.
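A stripped-down version of such a Vagrantfile might look as follows; the VM name and resource figures are illustrative. Disk sizing is left out, since depending on the Vagrant version it needs either an experimental feature flag or a third-party plugin.

```ruby
Vagrant.configure("2") do |config|
  # Base image for the guest OS.
  config.vm.box = "centos/7"

  # Resources allocated to the VM (illustrative values).
  config.vm.provider "virtualbox" do |vb|
    vb.name   = "dev-workstation-extension" # hypothetical VM name
    vb.memory = 4096                        # in MB
    vb.cpus   = 2
  end
end
```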

The Vagrant plugin `vagrant-vbguest` proved useful for automatically determining the appropriate version of VirtualBox’s Guest Additions for the guest OS and installing them. We also configured Vagrant to prefer the binaries bundled with it for functionality such as SSH (by setting `VAGRANT_PREFER_SYSTEM_BIN` to 0) rather than relying on software already installed on the host. We found that this made the setup process simpler and more repeatable.
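A sketch of the `vagrant-vbguest` part, guarded so that the Vagrantfile still loads on machines where the plugin is not installed. The `auto_update` option belongs to the plugin, not to Vagrant itself. `VAGRANT_PREFER_SYSTEM_BIN` is an environment variable read on the host, so it appears only as a comment.

```ruby
# VAGRANT_PREFER_SYSTEM_BIN must be set in the host environment before
# running vagrant, e.g. in cmd.exe:  set VAGRANT_PREFER_SYSTEM_BIN=0
Vagrant.configure("2") do |config|
  config.vm.box = "centos/7"

  # Only configure vbguest when the plugin is present
  # (install it with: vagrant plugin install vagrant-vbguest).
  if Vagrant.has_plugin?("vagrant-vbguest")
    # Install or update the Guest Additions in the guest when needed.
    config.vbguest.auto_update = true
  end
end
```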

The second major part of the work was integrating Ansible to provision the VM. For this, we used Vagrant’s `ansible_local` provisioner, which installs Ansible in the guest on the fly and runs the provisioning locally.

Then all that is required is an Ansible `playbook.yml` file that defines any particular configuration or software that needs to be set up on the guest OS.

We went a step further and leveraged third-party Ansible roles instead of reinventing the wheel and having to deal with the development and ongoing maintenance costs.

Ansible Galaxy is an online repository of such roles made available by the community, which you install with the `ansible-galaxy` command.

Since Vagrant abstracts away the installation and invocation of Ansible, we need to rely on Vagrant to make sure that these roles are installed and available when the playbook is executed. This is achieved through the `galaxy_command` parameter. The most elegant way to do this is to provide a `requirements.yml` file listing the required roles and have it passed to the `ansible-galaxy` command. Finally, we need to make sure that the Ansible files are available to the guest OS through a file share (by default, the directory containing the Vagrantfile is shared) and that their paths are relative to `/vagrant`.
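Putting the provisioner settings together gives something like the following sketch. It assumes `playbook.yml` and `requirements.yml` sit next to the Vagrantfile. `galaxy_role_file` points Vagrant at the requirements file, and the `galaxy_command` string shown mirrors Vagrant’s default template as we understand it, so you would only set it explicitly in order to change it.

```ruby
Vagrant.configure("2") do |config|
  config.vm.box = "centos/7"

  config.vm.provision "ansible_local" do |ansible|
    # Relative paths resolve against provisioning_path, which defaults to
    # /vagrant (the synced directory containing this Vagrantfile).
    ansible.playbook         = "playbook.yml"
    ansible.galaxy_role_file = "requirements.yml"
    # %{role_file} and %{roles_path} are placeholders filled in by Vagrant.
    ansible.galaxy_command   = "ansible-galaxy install --role-file=%{role_file} --roles-path=%{roles_path} --force"
  end
end
```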

Building a seamless experience…BAT to the rescue

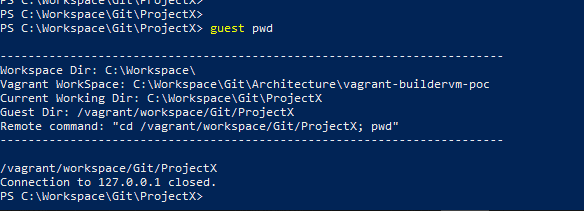

We were pursuing a solution that makes it as easy as possible to jump from working locally to working inside the VM. If possible, we also wanted to be able to make this switch without having to move through different windows.

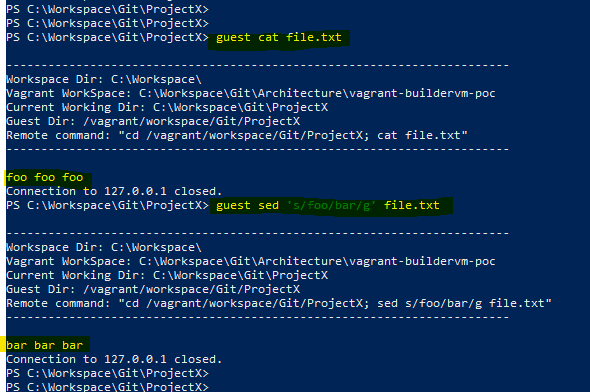

For this reason, we wrote a couple of utility batch scripts that made the process much easier. They leverage the fact that our entire workspace directory was synced with the guest VM, which allowed us to infer the corresponding workspace path on the guest from the current location on the host. For example, if on the host we are in `C:\Workspace\ProjectX` and the workspace is mapped to `/vagrant/workspace`, then we wanted to be able to run a command in `/vagrant/workspace/ProjectX` without jumping through hoops.

To do this, we placed a script on our PATH that takes a command and executes it in the corresponding guest directory using the `--command` (`-c`) flag of `vagrant ssh`. The great thing about this trick is that it allowed us to trigger Maven builds on the guest from the IDE by specifying a custom build command.
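The path-translation trick is easiest to see in code. Our actual helpers were batch scripts; what follows is a Ruby sketch of the same logic, assuming the workspace at `C:\Workspace` is synced to `/vagrant/workspace` (both hypothetical, as are the names `to_guest_path` and `vagrant_ssh_command`). It relies only on `vagrant ssh -c`, which runs a single command in the guest over SSH.

```ruby
require "shellwords"

# Hypothetical locations of the workspace on the host and in the guest.
HOST_WORKSPACE  = 'C:\Workspace'
GUEST_WORKSPACE = "/vagrant/workspace"

# Translate a Windows path inside the workspace into the matching guest path.
def to_guest_path(host_path, host_root = HOST_WORKSPACE, guest_root = GUEST_WORKSPACE)
  host = host_path.gsub("\\", "/").chomp("/")
  root = host_root.gsub("\\", "/").chomp("/")
  # Windows paths are case-insensitive, so compare the prefix ignoring case.
  inside = host.downcase == root.downcase ||
           host.downcase.start_with?(root.downcase + "/")
  raise ArgumentError, "#{host_path} is outside the shared workspace" unless inside

  # Keep the original casing of the remainder: the guest is case-sensitive.
  guest_root + host[root.length..-1]
end

# Build the argument list that runs `command` in the guest directory
# corresponding to `host_dir`.
def vagrant_ssh_command(host_dir, command)
  guest_dir = to_guest_path(host_dir)
  ["vagrant", "ssh", "-c", "cd #{Shellwords.escape(guest_dir)} && #{command}"]
end
```

A thin wrapper on the PATH would then call `system(*vagrant_ssh_command(Dir.pwd, ARGV.join(" ")))`, which is also what an IDE’s custom build command can invoke.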

We also extended the same script so that it can SSH into the VM directly in the directory corresponding to the current location on the host. To do this, during VM provisioning we set up a file share that syncs a bashrc directory in the vagrant user’s home folder. This lets us `cd` into the desired path (derived on the fly) on the guest upon login.
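The login trick can be sketched the same way. Everything here is hypothetical: a host directory synced to `~/.bashrc.d` in the guest, a guest `~/.bashrc` that sources the `*.sh` files in it, and the file name `cd-on-login.sh`. The host-side helper only has to write a single `cd` line before calling `vagrant ssh`.

```ruby
require "fileutils"
require "shellwords"

# Hypothetical setup: `shared_bashrc_dir` on the host is synced to
# ~/.bashrc.d on the guest, and the guest's ~/.bashrc contains e.g.
#   for f in ~/.bashrc.d/*.sh; do [ -r "$f" ] && . "$f"; done
# Writes a one-line script that changes into `guest_dir` on the next login.
def write_login_cd(shared_bashrc_dir, guest_dir)
  FileUtils.mkdir_p(shared_bashrc_dir)
  target = File.join(shared_bashrc_dir, "cd-on-login.sh")
  File.write(target, "cd #{Shellwords.escape(guest_dir)}\n")
  target
end
```

Because the file persists, the helper should rewrite it on every invocation; otherwise the next login lands in a stale directory.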

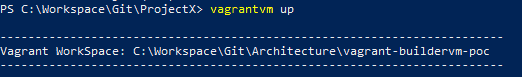

Finally, since a good developer is an efficient developer, we also wanted to be able to manage the VM from anywhere, so that, for instance, if we had not yet launched the VM, we would not need to navigate to the directory containing the Vagrantfile.

This is standard Vagrant functionality, enabled by setting the `VAGRANT_CWD` environment variable. What we added on top was storing the path permanently in a dedicated user variable and setting `VAGRANT_CWD` from it only when we wanted to manage this particular environment.
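In the same spirit, here is a Ruby sketch of the `VAGRANT_CWD` trick; `DEV_VM_HOME` is a hypothetical name for the dedicated user variable. Passing the environment hash to `Kernel#system` sets `VAGRANT_CWD` for that one child process only, so it never leaks into other Vagrant projects used from the same shell.

```ruby
# Hypothetical user variable holding the directory that contains our
# Vagrantfile, e.g. persisted once in cmd.exe with:  setx DEV_VM_HOME C:\vm
ENV_VAR = "DEV_VM_HOME"

# Build the environment for a single vagrant invocation: VAGRANT_CWD tells
# Vagrant where to find the Vagrantfile, regardless of the current directory.
def vagrant_env(env = ENV, var = ENV_VAR)
  dir = env[var]
  raise KeyError, "#{var} is not set" if dir.nil? || dir.empty?

  { "VAGRANT_CWD" => dir }
end
```

`system(vagrant_env, "vagrant", "up")` would then bring the VM up from any directory.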

File I/O performance

In the course of testing out the solution, we encountered a few limitations that we think are relevant to mention.

The problems revolved around the file-sharing mechanism. Although a number of options are available, the approach might not be a good fit for situations that require intensive file I/O. We first set up a plain VirtualBox file share, which was a good starting point: it works without much configuration and syncs both ways instantly, which is great in most cases.

We hit the first wall as soon as we tried running a front-end build with npm, which relies on creating symbolic links for common dependency packages. Creating symlinks requires a specific privilege to be granted on the Windows host, and even then it does not work very well. We tried working around the issue with rsync, which by default syncs changes in only one direction and runs on demand. There are ways to make it watch for changes, and bidirectional syncing could theoretically be set up by configuring each direction separately.
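For completeness, switching a share to rsync is a matter of the share type. The sketch below is illustrative: the paths are examples, and the exclusions are a suggestion rather than the configuration we tested.

```ruby
Vagrant.configure("2") do |config|
  config.vm.box = "centos/7"

  # One-way (host -> guest) sync with rsync instead of a VirtualBox shared
  # folder; this needs an rsync binary on the Windows host (e.g. Cygwin or
  # MSYS2). It syncs on "vagrant up"/"vagrant reload", on demand with
  # "vagrant rsync", and continuously while "vagrant rsync-auto" runs.
  config.vm.synced_folder "C:/Workspace", "/vagrant/workspace",
    type: "rsync",
    # Excluded paths are left alone in the guest, so dependencies installed
    # there are not overwritten or removed by the next sync.
    rsync__exclude: ["node_modules/", ".git/"]
end
```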

However, this creates a race condition that risks changes being reverted or data being lost. Another option, SMB shares, required a bit more work to set up and ultimately was not performant enough for our needs.

In the end, we found a way to make the npm build run without symbolic links, which allowed us to revert to the native VirtualBox file share. The first caveat was that this required changes to our source-code repository, which is not ideal. Second, due to the huge number of dependencies involved in one of our typical npm-based front-end builds, the intensive file I/O caused locking on the file share, which degraded performance.

Conclusions

Our aim was to extend a Windows workstation with a Linux kernel running in a VM, and to make it as easy as possible to manage both and to switch between working in either environment. The result of our efforts turned out to be a very convenient solution in certain situations.

Our setup was particularly helpful when you need to run applications in an environment similar to production, or when you want to run development tooling that is easier to install and configure on a Linux host. We have shown how, with the help of tools like Vagrant and Ansible, it is easy to create a setup that can be shared and recreated consistently while keeping the configuration concise.

From a performance point of view, the solution worked very well for computationally demanding tasks. However, the same cannot be said for tasks that required intensive file I/O, due to the synchronization overhead.
